For those of you who never used the now-retired “Google Website Optimizer” or the newer “Google Experiments”, both are split testing tools that let you test two different web pages by alternately serving them to your visitors.

Google Website Optimizer

Website Optimizer, which was replaced by Google Content Experiments, allowed for A/B and multivariate testing. Multivariate testing allows you to test multiple aspects of one page. This meant that the test’s conclusion would be based on all the goals of the website. For example, let’s say you want to test new copy and a new image on a page that has two conversion goals on it: a free download and a price quote. The results of the test would identify the variation whose elements tend to consistently produce the greatest increase in conversions. Website Optimizer did this by sending roughly equal numbers of visitors to each of the test pages.

Google Experiments

Then along came Google Experiments, which was integrated into Google Analytics. The main differences from its predecessor are that it no longer offers multivariate testing and that the method by which it sends visitors to each of the test pages has changed.

No more Multivariate Testing

Now that multivariate testing has been removed, Google Experiments decides the result of the test based on a single conversion goal. This means that you have to be a bit more careful before choosing the winning variant, since it may have adverse effects on other goals which were not factored into the test. The good news is that you can still monitor on your own how the other goals were affected by the test. However, the “winning variant” will be based only on the single goal chosen during the experiment setup.

Multi-Armed Bandit Experiments

The method Google Experiments now uses to split traffic between your test pages is known as multi-armed bandit testing.

Wikipedia – In probability theory, the multi-armed bandit problem is the problem a gambler faces at a row of slot machines, sometimes known as “one-armed bandits”, when deciding which machines to play, how many times to play each machine and in which order to play them. When played, each machine provides a random reward from a distribution specific to that machine. The objective of the gambler is to maximize the sum of rewards earned through a sequence of lever pulls.

Google – The name “multi-armed bandit” describes a hypothetical experiment where you face several slot machines (“one-armed bandits”) with potentially different expected payouts. You want to find the slot machine with the best payout rate, but you also want to maximize your winnings. The fundamental tension is between “exploiting” arms that have performed well in the past and “exploring” new or seemingly inferior arms in case they might perform even better.

Time is money and money is time, and according to Google this type of testing is designed to save you time. They claim that the test is statistically valid and will help you identify the best variant quickly. This in turn is said to help you implement that winning page so that you can begin to enjoy that higher conversion rate ASAP.

The way it works is as follows: twice per day Google looks at how the variations are performing and adjusts the share of traffic sent to each one accordingly. A top-performing page will get more visits, while a page that is under-performing gets “throttled down”. As the experiment goes on, Google claims that it gets wiser at identifying where the real payoffs are.
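To make the reallocation step more concrete, here is a minimal sketch of one common way to do it: estimate, for each variation, the probability that it is the best one, and use that as its share of traffic. The Beta model, the function name `bandit_weights` and the sample numbers are my own assumptions for illustration; this is not Google's actual implementation.

```python
import random

def bandit_weights(successes, trials, draws=10000, seed=0):
    """Estimate what share of traffic each variation should receive.

    Assumption: each variation's conversion rate is modelled with a
    Beta(1 + conversions, 1 + non-conversions) distribution. A variation's
    weight is the estimated probability that it has the highest rate.
    """
    rng = random.Random(seed)
    wins = [0] * len(trials)
    for _ in range(draws):
        # Draw one plausible conversion rate per variation.
        samples = [
            rng.betavariate(1 + s, 1 + (n - s))
            for s, n in zip(successes, trials)
        ]
        # Credit the variation that came out on top in this draw.
        wins[samples.index(max(samples))] += 1
    return [w / draws for w in wins]

# Hypothetical numbers: original converted 30 of 100, variant 40 of 100.
weights = bandit_weights(successes=[30, 40], trials=[100, 100])
print(weights)
```

Running this again after each batch of visits with the updated counts mimics the periodic adjustment described above: the more one page pulls ahead, the larger its share of the next batch of traffic.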

Mixed reactions

When Google released Content Experiments it got mixed reactions: some people were upset by the lack of multivariate testing, and there were a few murmurings about the unequal traffic sent to test pages by the multi-armed bandit method.

One thing that troubled me came from Google’s own FAQ:

The bandit uses sequential Bayesian updating to learn from each day’s experimental results, which is a different notion of statistical validity than the one used by classical testing. A classical test starts by assuming a null hypothesis. For example, “The variations are all equally effective.” It then accumulates evidence about the hypothesis, and makes a judgement about whether it can be rejected. If you can reject the null hypothesis you’ve found a statistically significant result.
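For comparison, the classical approach described in that FAQ excerpt can be sketched as a two-proportion z-test, where the null hypothesis is that both pages convert equally well. The function name and the numbers below are hypothetical and only meant to illustrate the idea.

```python
import math

def two_proportion_z_test(conv_a, n_a, conv_b, n_b):
    """Return (z, two-sided p-value) for H0: both pages convert equally."""
    p_a = conv_a / n_a
    p_b = conv_b / n_b
    # Under the null hypothesis both pages share one pooled conversion rate.
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (p_b - p_a) / se
    # Two-sided p-value from the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value

# Hypothetical test with equal traffic: 50 of 500 vs. 80 of 500 conversions.
z, p_value = two_proportion_z_test(50, 500, 80, 500)
print(f"z = {z:.2f}, p = {p_value:.4f}")
```

If the p-value falls below the threshold you picked before the test (0.05 is a common choice), you reject the null hypothesis and call the result statistically significant.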

Instead of sending equal traffic to each of the test pages, the page which performs better will start to get more traffic. If you are running a test across pages which do not get huge amounts of traffic, or depending on the traffic blend they receive, one variant can run away while the other is left in the dust and doesn’t get a fair chance. For more on ‘traffic blends’, Will Critchlow wrote an interesting article about “why your CRO tests fail”.

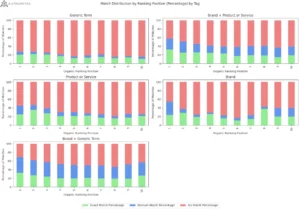

What sparked my interest in this subject was seeing a number of experiments conducted by our clients which seemed to produce skewed results. Here is one example:

After 777 visits and 15 days the original page won but it received 742 visits vs the variant page which got only 35 visits. That’s a BIG difference!

Sure, that is a ~58% vs. a 37% conversion rate, but some of the results didn’t sit well with me, especially after the client implemented the “winning page” and it underperformed in the real world.
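One way to see why the lopsided split is a concern is to put a confidence interval around each conversion rate. The sketch below uses the Wilson score interval. The visit counts are the ones from the experiment above, but the conversion counts (430 and 13) are assumptions that I back-calculated from the rounded rates, so treat the output as a rough illustration only.

```python
import math

def wilson_interval(conversions, visits, z=1.96):
    """Return the (low, high) Wilson score interval for a conversion rate."""
    p = conversions / visits
    denom = 1 + z**2 / visits
    center = (p + z**2 / (2 * visits)) / denom
    half = z * math.sqrt(p * (1 - p) / visits + z**2 / (4 * visits**2)) / denom
    return center - half, center + half

# Visit counts come from the experiment above; the conversion counts are
# assumptions back-calculated from the rounded rates (~58% and 37%).
original = wilson_interval(conversions=430, visits=742)
variant = wilson_interval(conversions=13, visits=35)

for name, (low, high) in [("original", original), ("variant", variant)]:
    print(f"{name}: {low:.1%} to {high:.1%} (width {high - low:.1%})")
```

Under these assumed counts the interval for the variant is several times wider than the one for the original, which shows how little 35 visits can tell you about a page's true conversion rate.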

Final Thoughts

For tests with a lot more traffic this bandit method may seem sound. In the event that you do not have a lot of traffic and you receive results as seen above, I would recommend taking advice from Critchlow’s article and running multiple variants of the same tests and comparing the results. In some cases this may help avoid the situation which I described above where the runaway “winning page” was implemented and underperformed in real life.

In my opinion, receiving a certain type of traffic blend, good or bad, could skew a multi-armed bandit test much more than a classic A/B split test. Don’t get me wrong, I am not saying “don’t ever use Google Content Experiments”. I am just saying that you should stay alert to the possibility of getting skewed results.

Personally, I’m exploring other options which do not employ the multi-armed bandit method, still allow multivariate testing and don’t have four-figure-and-up monthly fees, such as Visual Website Optimizer, Unbounce and Convert.com.

Despite claims that multi-armed bandit (MAB) experiments can save you time and money, I personally feel more comfortable with classic A/B testing with the multivariate option available to me. What may save you some money in the short term could end up costing you in the long run.